Review:

The Box, by Marc Levinson

| Publisher: |

Princeton University Press |

| Copyright: |

2006, 2008 |

| Printing: |

2008 |

| ISBN: |

0-691-13640-8 |

| Format: |

Trade paperback |

| Pages: |

278 |

The shipping container as we know it is only about 65 years old. Shipping

things in containers is obviously much older; we've been doing that for

longer than we've had ships. But the standardized metal box, set on a

rail car or loaded with hundreds of its indistinguishable siblings into an

enormous, specially-designed cargo ship, became economically significant

only recently. Today it is one of the oft-overlooked foundations of

global supply chains. The startlingly low cost of container shipping is

part of why so much of what US consumers buy comes from Asia, and why most

complex machinery is assembled in multiple countries from parts gathered

from a dizzying variety of sources.

Marc Levinson's

The Box is a history of container shipping, from

its (arguable) beginnings in the trailer bodies loaded on Pan-Atlantic

Steamship Corporation's

Ideal-X in 1956 to just-in-time

international supply chains in the 2000s. It's a popular history that

falls on the academic side, with a full index and 60 pages of citations

and other notes. (Per my normal convention, those pages aren't included

in the sidebar page count.)

The Box is organized mostly

chronologically, but Levinson takes extended detours into labor relations

and container standardization at the appropriate points in the timeline.

The book is very US-centric. Asian, European, and Australian shipping is

discussed mostly in relation to trade with the US, and Africa is barely

mentioned. I don't have the background to know whether this is

historically correct for container shipping or is an artifact of

Levinson's focus.

Many single-item popular histories focus on something that involves

obvious technological innovation (

paint

pigments) or deep cultural resonance (

salt)

or at least entertaining quirkiness (

punctuation marks,

resignation letters).

Shipping containers are important but simple and boring. The least

interesting chapter in

The Box covers container standardization, in

which a whole bunch of people had boring meetings, wrote some things done,

discovered many of the things they wrote down were dumb, wrote more things

down, met with different people to have more meetings, published a

standard that partly reflected the fixations of that one guy who is always

involved in standards discussions, and then saw that standard be promptly

ignored by the major market players.

You may be wondering if that describes the whole book. It doesn't, but

not because of the shipping containers.

The Box is interesting

because the process of economic change is interesting, and container

shipping is almost entirely about business processes rather than

technology.

Levinson starts the substance of the book with a description of shipping

before standardized containers. This was the most effective, and probably

the most informative, chapter. Beyond some vague ideas picked up via

cultural osmosis, I had no idea how cargo shipping worked. Levinson gives

the reader a memorable feel for the sheer amount of physical labor

involved in loading and unloading a ship with mixed cargo (what's called

"breakbulk" cargo to distinguish it from bulk cargo like coal or wheat

that fills an entire hold). It's not just the effort of hauling barrels,

bales, or boxes with cranes or raw muscle power, although that is

significant. It's also the need to touch every piece of cargo to move it,

inventory it, warehouse it, and then load it on a truck or train.

The idea of container shipping is widely attributed, including by

Levinson, to Malcom McLean, a trucking magnate who became obsessed with

the idea of what we now call intermodal transport: using the same

container for goods on ships, railroads, and trucks so that the contents

don't have to be unpacked and repacked at each transfer point. Levinson

uses his career as an anchor for the story, from his acquisition of

Pan-American Steamship Corporation to pursue his original idea (backed by

private equity and debt, in a very modern twist), through his years

running Sea-Land as the first successful major container shipper, and

culminating in his disastrous attempted return to shipping by acquiring

United States Lines.

I am dubious of Great Man narratives in history books, and I think

Levinson may be overselling McLean's role. Container shipping was an

obvious idea that the industry had been talking about for decades. Even

Levinson admits that, despite a few gestures at giving McLean central

credit. Everyone involved in shipping understood that cargo handling was

the most expensive and time-consuming part, and that if one could minimize

cargo handling at the docks by loading and unloading full containers that

didn't have to be opened, shipping costs would be much lower (and profits

higher). The idea wasn't the hard part. McLean was the first person to

pull it off at scale, thanks to some audacious economic risks and a

willingness to throw sharp elbows and play politics, but it seems likely

that someone else would have played that role if McLean hadn't existed.

Container shipping didn't happen earlier because achieving that cost

savings required a huge expenditure of capital and a major disruption of a

transportation industry that wasn't interested in being disrupted. The

ships had to be remodeled and eventually replaced; manufacturing had to

change; railroad and trucking in theory had to change (in practice,

intermodal transport; McLean's obsession, didn't happen at scale until

much later); pricing had to be entirely reworked; logistical tracking of

goods had to be done much differently; and significant amounts of

extremely expensive equipment to load and unload heavy containers had to

be designed, built, and installed. McLean's efforts proved the cost

savings was real and compelling, but it still took two decades before the

shipping industry reconstructed itself around containers.

That interim period is where this history becomes a labor story, and

that's where Levinson's biases become somewhat distracting.

In the United States, loading and unloading of cargo ships was done by

unionized longshoremen through a bizarre but complex and long-standing

system of contract hiring. The cost savings of container shipping comes

almost completely from the loss of work for longshoremen. It's a classic

replacement of labor with capital; the work done by gangs of twenty or

more longshoreman is instead done by a single crane operator at much

higher speed and efficiency. The longshoreman unions therefore opposed

containerization and launched numerous strikes and other labor actions to

delay use of containers, force continued hiring that containers made

unnecessary, or win buyouts and payoffs for current longshoremen.

Levinson is trying to write a neutral history and occasionally shows some

sympathy for longshoremen, but they still get the Luddite treatment in

this book: the doomed reactionaries holding back progress. Longshoremen

had a vigorous and powerful union that won better working conditions

structured in ways that look absurd to outsiders, such as requiring that

ships hire twice as many men as necessary so that half of them could get

paid while not working. The unions also had a reputation for corruption

that Levinson stresses constantly, and theft of breakbulk cargo during

loading and warehousing was common. One of the interesting selling points

for containers was that lossage from theft during shipping apparently

decreased dramatically.

It's obvious that the surface demand of the longshoremen unions, that

either containers not be used or that just as many manual laborers be

hired for container shipping as for earlier breakbulk shipping, was

impossible, and that the profession as it existed in the 1950s was doomed.

But beneath those facts, and the smoke screen of Levinson's obvious

distaste for their unions, is a real question about what society owes

workers whose jobs are eliminated by major shifts in business practices.

That question of fairness becomes more pointed when one realizes that this

shift was

massively subsidized by US federal and local governments.

McLean's Sea-Land benefited from direct government funding and subsidized

navy surplus ships, massive port construction in New Jersey with public

funds, and a sweetheart logistics contract from the US military to supply

troops fighting the Vietnam War that was so generous that the return

voyage was free and every container Sea-Land picked up from Japanese ports

was pure profit. The US shipping industry was heavily

government-supported, particularly in the early days when the labor

conflicts were starting.

Levinson notes all of this, but never draws the contrast between the

massive support for shipping corporations and the complete lack of formal

support for longshoremen. There are hard ethical questions about what

society owes displaced workers even in a pure capitalist industry

transformation, and this was very far from pure capitalism. The US

government bankrolled large parts of the growth of container shipping, but

the only way that longshoremen could get part of that money was through

strikes to force payouts from private shipping companies.

There are interesting questions of social and ethical history here that

would require careful disentangling of the tendency of any group to oppose

disruptive change and fairness questions of who gets government support

and who doesn't. They will have to wait for another book; Levinson never

mentions them.

There were some things about this book that annoyed me, but overall it's a

solid work of popular history and deserves its fame. Levinson's account

is easy to follow, specific without being tedious, and backed by

voluminous notes. It's not the most compelling story on its own merits;

you have to have some interest in logistics and economics to justify

reading the entire saga. But it's the sort of history that gives one a

sense of the fractal complexity of any area of human endeavor, and I

usually find those worth reading.

Recommended if you like this sort of thing.

Rating: 7 out of 10

After seven years of service as member and secretary on the GHC Steering Committee, I have resigned from that role. So this is a good time to look back and retrace the formation of the GHC proposal process and committee.

In my memory, I helped define and shape the proposal process, optimizing it for effectiveness and throughput, but memory can be misleading, and judging from the paper trail in my email archives, this was indeed mostly Ben Gamari s and Richard Eisenberg s achievement: Already in Summer of 2016, Ben Gamari set up the

After seven years of service as member and secretary on the GHC Steering Committee, I have resigned from that role. So this is a good time to look back and retrace the formation of the GHC proposal process and committee.

In my memory, I helped define and shape the proposal process, optimizing it for effectiveness and throughput, but memory can be misleading, and judging from the paper trail in my email archives, this was indeed mostly Ben Gamari s and Richard Eisenberg s achievement: Already in Summer of 2016, Ben Gamari set up the

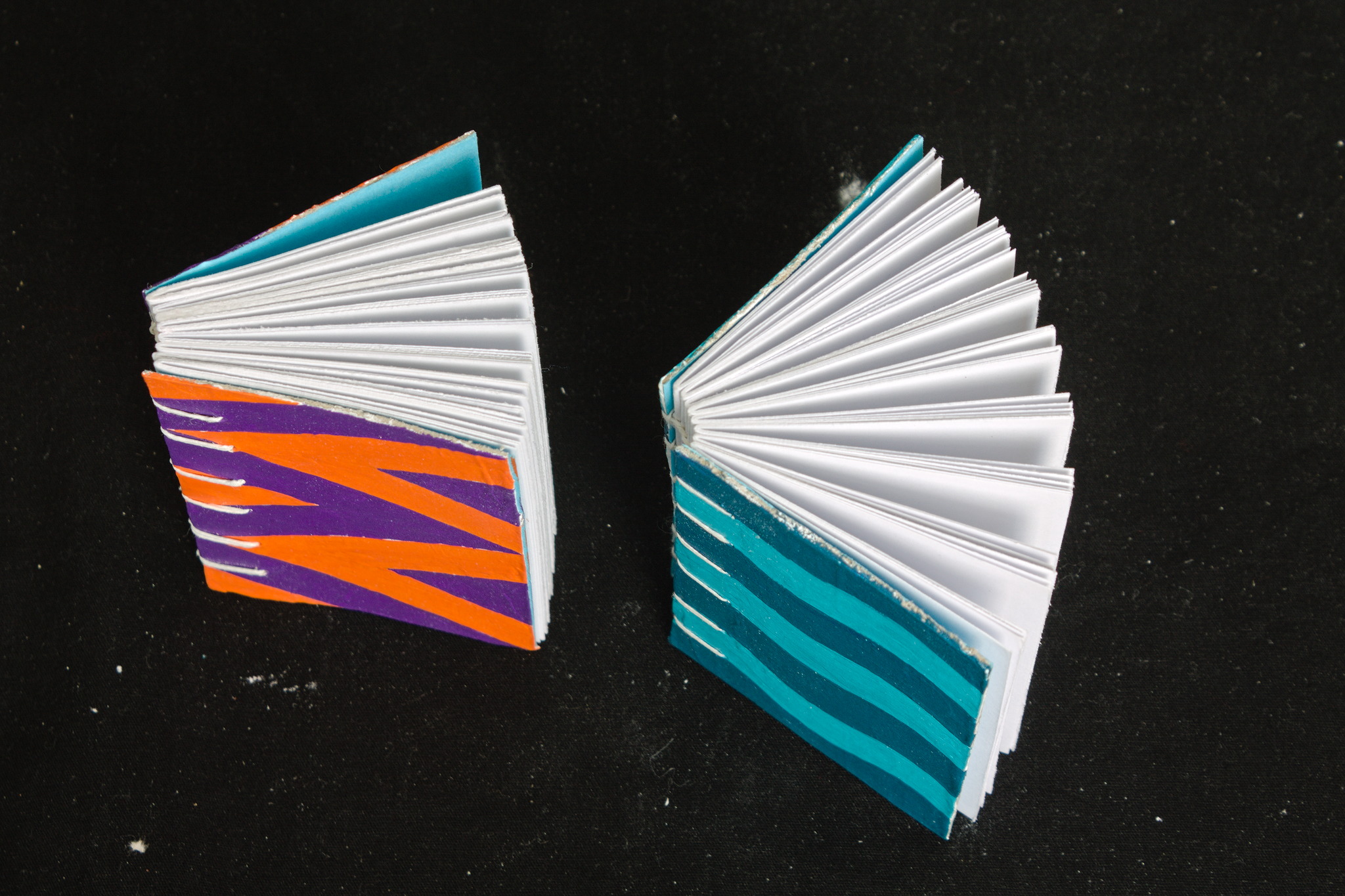

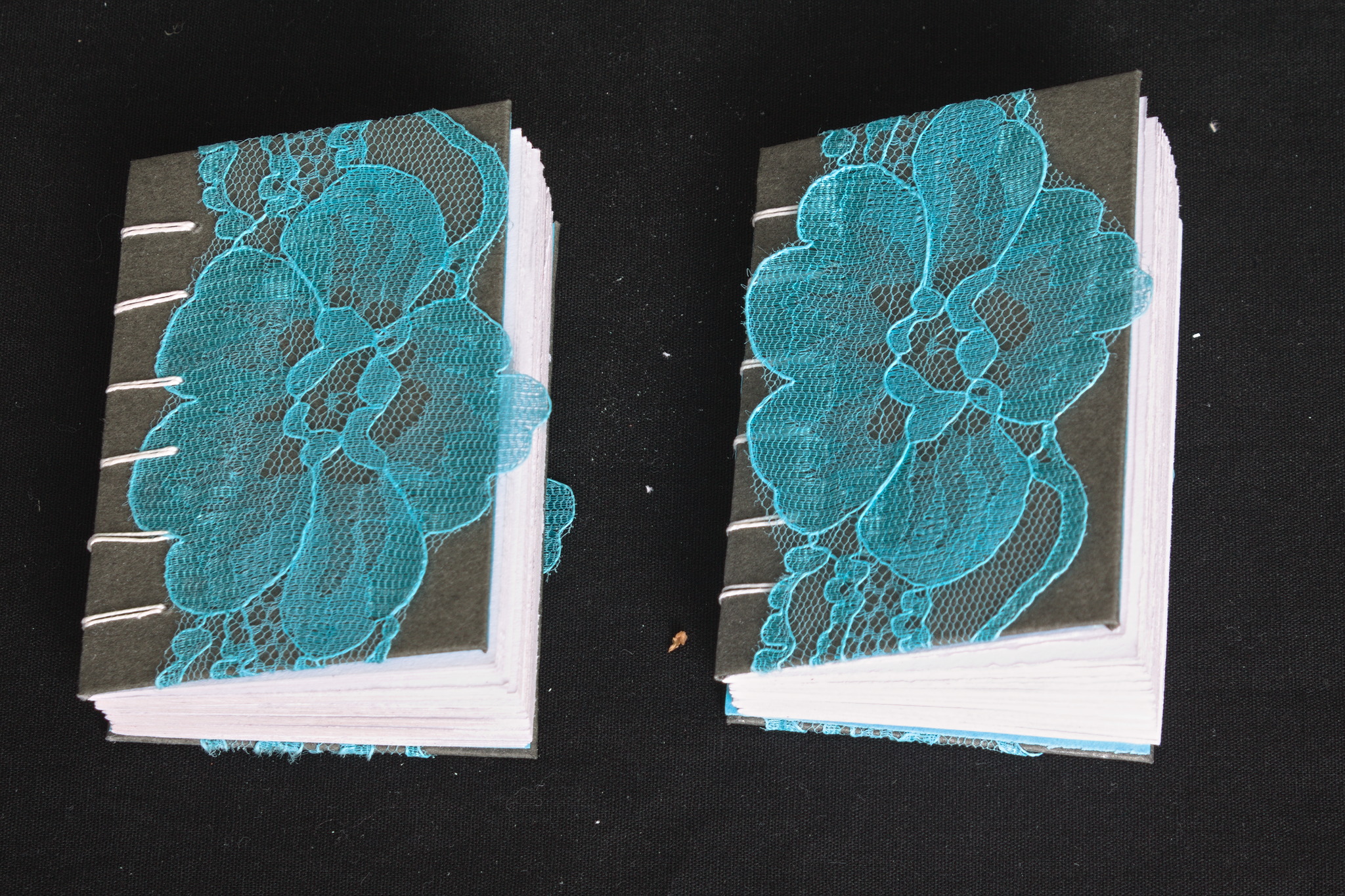

In 2022 I read a post on the fediverse by somebody who mentioned that

they had bought on a whim a cute tiny book years ago, and that it

had been a companion through hard times. Right now I can t find the

post, but it was pretty aaaaawwww.

In 2022 I read a post on the fediverse by somebody who mentioned that

they had bought on a whim a cute tiny book years ago, and that it

had been a companion through hard times. Right now I can t find the

post, but it was pretty aaaaawwww.

At the same time, I had discovered Coptic binding, and I wanted to do

some exercise to let my hands learn it, but apparently there is a limit

to the number of notebooks and sketchbooks a person needs (I m not 100%

sure I actually believe this, but I ve heard it is a thing).

At the same time, I had discovered Coptic binding, and I wanted to do

some exercise to let my hands learn it, but apparently there is a limit

to the number of notebooks and sketchbooks a person needs (I m not 100%

sure I actually believe this, but I ve heard it is a thing).

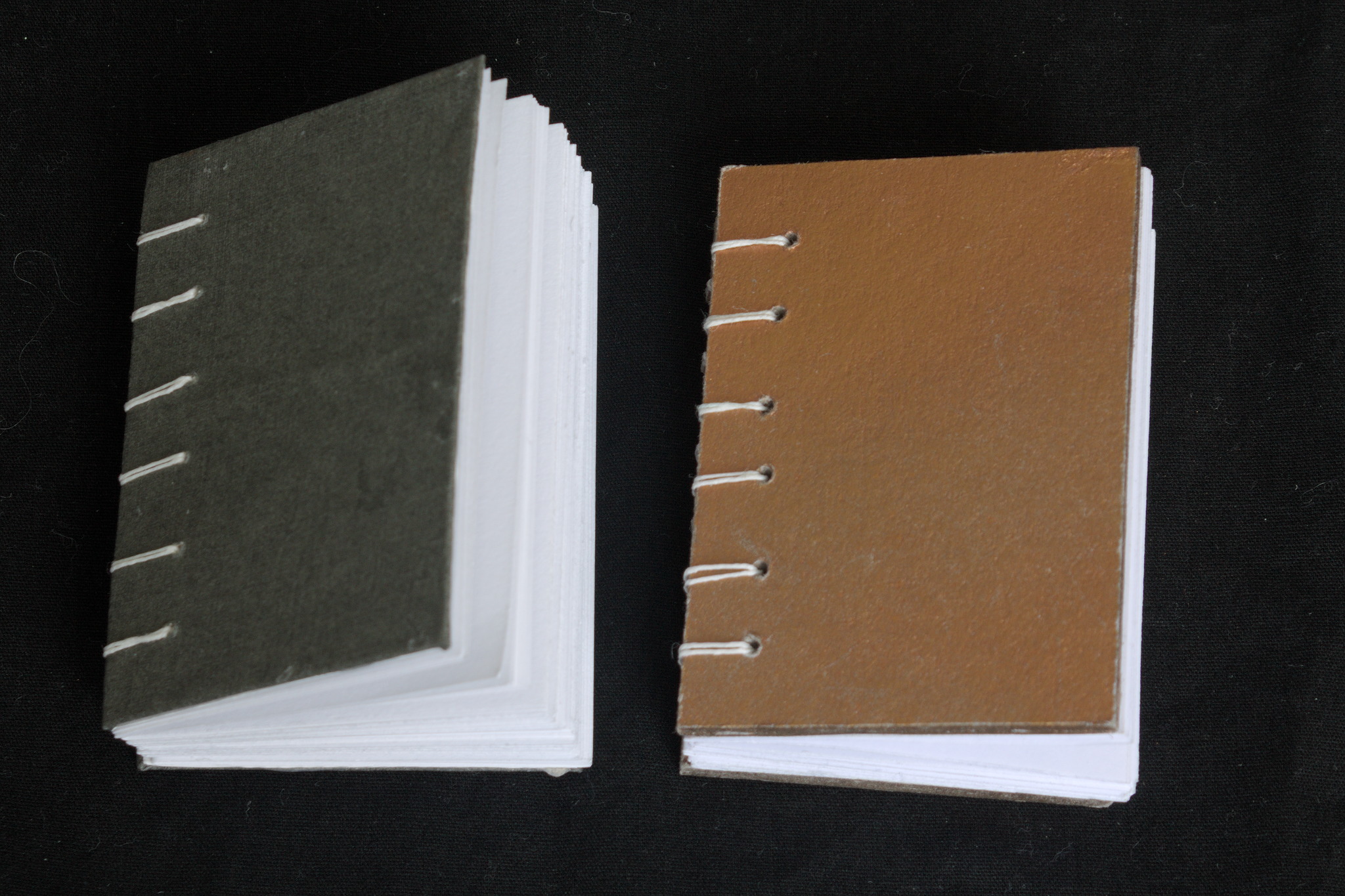

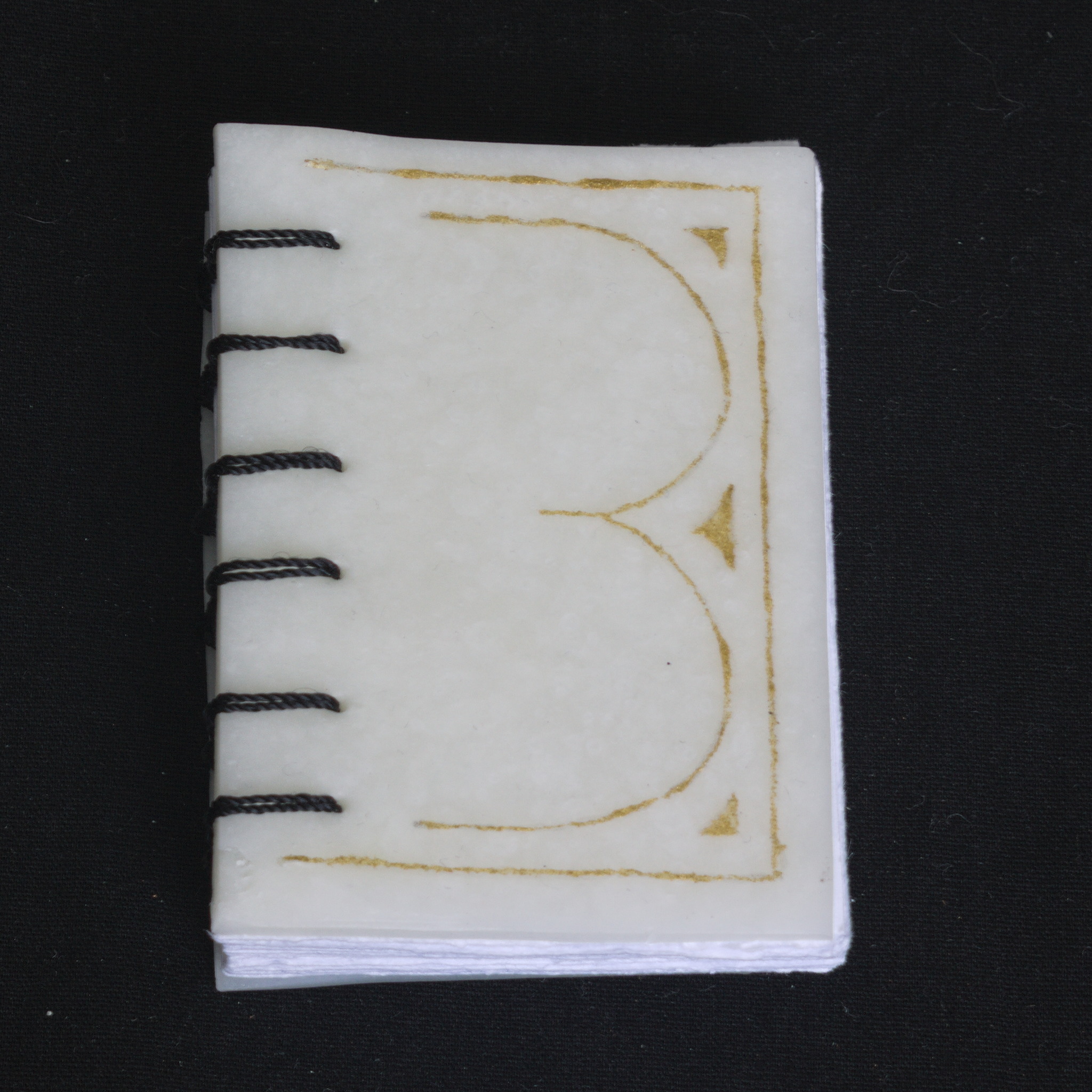

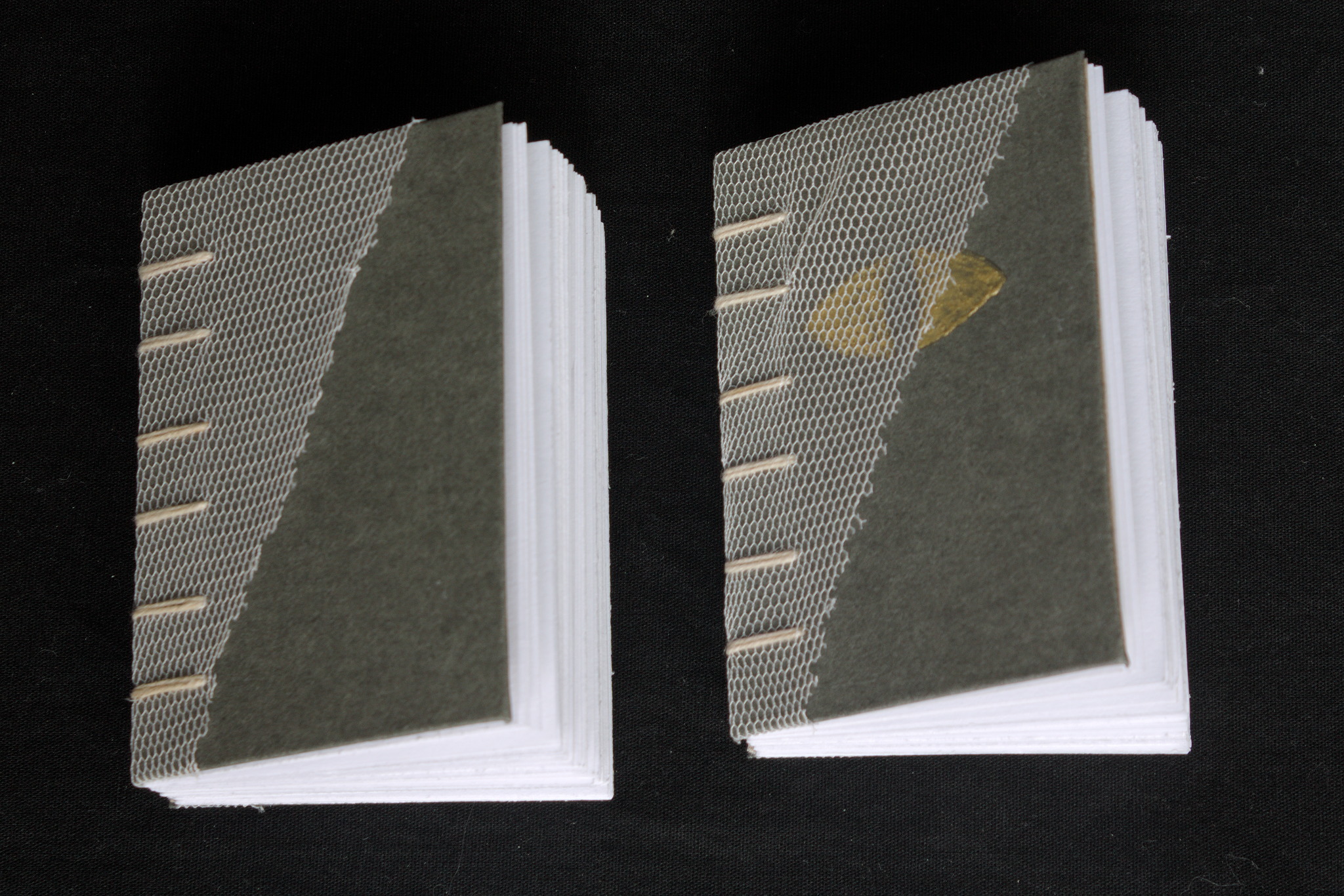

So I decided to start making minibooks with the intent to give them

away: I settled (mostly) on the A8 size, and used a combination of found

materials, leftovers from bigger projects and things I had in the Stash.

As for paper, I ve used a variety of the ones I have that are at the

very least good enough for non-problematic fountain pen inks.

So I decided to start making minibooks with the intent to give them

away: I settled (mostly) on the A8 size, and used a combination of found

materials, leftovers from bigger projects and things I had in the Stash.

As for paper, I ve used a variety of the ones I have that are at the

very least good enough for non-problematic fountain pen inks.

Thanks to the small size, and the way coptic binding works, I ve been

able to play around with the covers, experimenting with different styles

beyond the classic bookbinding cloth / paper covered cardboard,

including adding lace, covering food box cardboard with gesso and

decorating it with acrylic paints, embossing designs by gluing together

two layers of cardboard, one of which has holes, making covers

completely out of cernit, etc. Some of these I will probably also use in

future full-scale projects, but it s nice to find out what works and

what doesn t on a small scale.

Thanks to the small size, and the way coptic binding works, I ve been

able to play around with the covers, experimenting with different styles

beyond the classic bookbinding cloth / paper covered cardboard,

including adding lace, covering food box cardboard with gesso and

decorating it with acrylic paints, embossing designs by gluing together

two layers of cardboard, one of which has holes, making covers

completely out of cernit, etc. Some of these I will probably also use in

future full-scale projects, but it s nice to find out what works and

what doesn t on a small scale.

Now, after a year of sporadically making these I have to say that the

making went quite well: I enjoyed the making and the creativity in

making different covers. The giving away was a bit more problematic, as

I didn t really have a lot of chances to do so, so I believe I still

have most of them. In 2024 I ll try to look for more opportunities (and

if you live nearby and want one or a few feel free to ask!)

Now, after a year of sporadically making these I have to say that the

making went quite well: I enjoyed the making and the creativity in

making different covers. The giving away was a bit more problematic, as

I didn t really have a lot of chances to do so, so I believe I still

have most of them. In 2024 I ll try to look for more opportunities (and

if you live nearby and want one or a few feel free to ask!)

I think while developing Wayland-as-an-ecosystem we are now entrenched into narrow concepts of how a desktop should work. While discussing Wayland protocol additions, a lot of concepts clash, people from different desktops with different design philosophies debate the merits of those over and over again never reaching any conclusion (just as you will never get an answer out of humans whether sushi or pizza is the clearly superior food, or whether CSD or SSD is better). Some people want to use Wayland as a vehicle to force applications to submit to their desktop s design philosophies, others prefer the smallest and leanest protocol possible, other developers want the most elegant behavior possible. To be clear, I think those are all very valid approaches.

But this also creates problems: By switching to Wayland compositors, we are already forcing a lot of porting work onto toolkit developers and application developers. This is annoying, but just work that has to be done. It becomes frustrating though if Wayland provides toolkits with absolutely no way to reach their goal in any reasonable way. For Nate s Photoshop analogy: Of course Linux does not break Photoshop, it is Adobe s responsibility to port it. But what if Linux was missing a crucial syscall that Photoshop needed for proper functionality and Adobe couldn t port it without that? In that case it becomes much less clear on who is to blame for Photoshop not being available.

A lot of Wayland protocol work is focused on the environment and design, while applications and work to port them often is considered less. I think this happens because the overlap between application developers and developers of the desktop environments is not necessarily large, and the overlap with people willing to engage with Wayland upstream is even smaller. The combination of Windows developers porting apps to Linux and having involvement with toolkits or Wayland is pretty much nonexistent. So they have less of a voice.

I think while developing Wayland-as-an-ecosystem we are now entrenched into narrow concepts of how a desktop should work. While discussing Wayland protocol additions, a lot of concepts clash, people from different desktops with different design philosophies debate the merits of those over and over again never reaching any conclusion (just as you will never get an answer out of humans whether sushi or pizza is the clearly superior food, or whether CSD or SSD is better). Some people want to use Wayland as a vehicle to force applications to submit to their desktop s design philosophies, others prefer the smallest and leanest protocol possible, other developers want the most elegant behavior possible. To be clear, I think those are all very valid approaches.

But this also creates problems: By switching to Wayland compositors, we are already forcing a lot of porting work onto toolkit developers and application developers. This is annoying, but just work that has to be done. It becomes frustrating though if Wayland provides toolkits with absolutely no way to reach their goal in any reasonable way. For Nate s Photoshop analogy: Of course Linux does not break Photoshop, it is Adobe s responsibility to port it. But what if Linux was missing a crucial syscall that Photoshop needed for proper functionality and Adobe couldn t port it without that? In that case it becomes much less clear on who is to blame for Photoshop not being available.

A lot of Wayland protocol work is focused on the environment and design, while applications and work to port them often is considered less. I think this happens because the overlap between application developers and developers of the desktop environments is not necessarily large, and the overlap with people willing to engage with Wayland upstream is even smaller. The combination of Windows developers porting apps to Linux and having involvement with toolkits or Wayland is pretty much nonexistent. So they have less of a voice.

I will also bring my two protocol MRs to their conclusion for sure, because as application developers we need clarity on what the platform (either all desktops or even just a few) supports and will or will not support in future. And the only way to get something good done is by contribution and friendly discussion.

I will also bring my two protocol MRs to their conclusion for sure, because as application developers we need clarity on what the platform (either all desktops or even just a few) supports and will or will not support in future. And the only way to get something good done is by contribution and friendly discussion.

The Rcpp Core Team is once again thrilled to announce a new release

1.0.12 of the

The Rcpp Core Team is once again thrilled to announce a new release

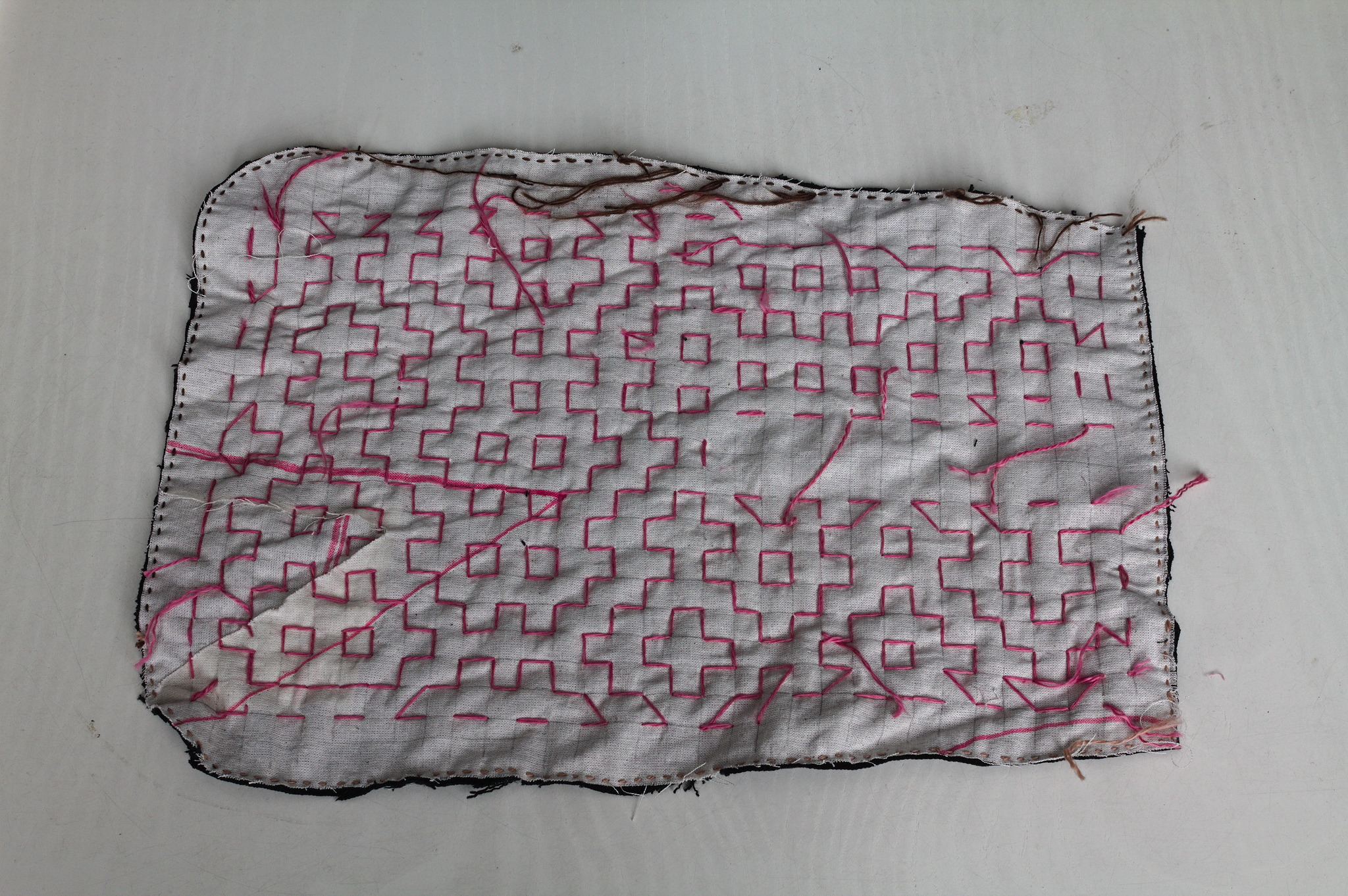

1.0.12 of the  Lately I ve seen people on the internet talking about victorian crazy

quilting. Years ago I had watched a

Lately I ve seen people on the internet talking about victorian crazy

quilting. Years ago I had watched a  I cut a

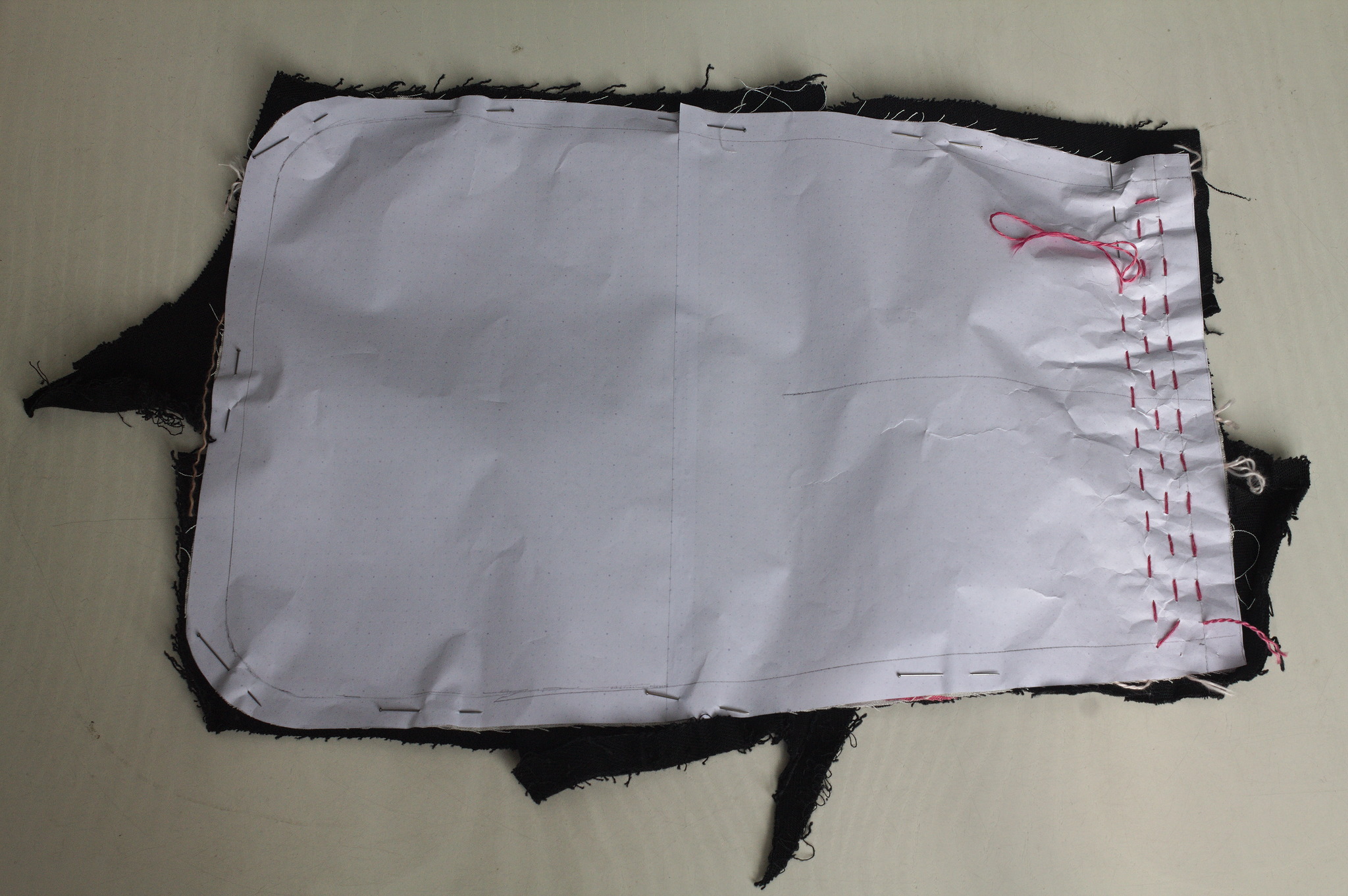

I cut a  For the second piece I tried to use a piece of paper with the square

grid instead of drawing it on the fabric: it worked, mostly, I would not

do it again as removing the paper was more of a hassle than drawing the

lines in the first place. I suspected it, but had to try it anyway.

For the second piece I tried to use a piece of paper with the square

grid instead of drawing it on the fabric: it worked, mostly, I would not

do it again as removing the paper was more of a hassle than drawing the

lines in the first place. I suspected it, but had to try it anyway.

Then I added a lining from some plain black cotton from the stash; for

the slit I put the lining on the front right sides together, sewn

at 2 mm from the marked slit, cut it, turned the lining to the back

side, pressed and then topstitched as close as possible to the slit from

the front.

Then I added a lining from some plain black cotton from the stash; for

the slit I put the lining on the front right sides together, sewn

at 2 mm from the marked slit, cut it, turned the lining to the back

side, pressed and then topstitched as close as possible to the slit from

the front.

I bound everything with bias tape, adding herringbone tape loops at the

top to hang it from a belt (such as one made from the waistband of one

of the donor pair of jeans) and that was it.

I bound everything with bias tape, adding herringbone tape loops at the

top to hang it from a belt (such as one made from the waistband of one

of the donor pair of jeans) and that was it.

I like the way the result feels; maybe it s a bit too stiff for a

pocket, but I can see it work very well for a bigger bag, and maybe even

a jacket or some other outer garment.

I like the way the result feels; maybe it s a bit too stiff for a

pocket, but I can see it work very well for a bigger bag, and maybe even

a jacket or some other outer garment.

I'd planned to write some private mail on the subject of preparing and

delivering conference talks. However, each time I try to write that mail,

I've managed to somehow contrive to lose it. So I thought I'd try as a

blog post instead, to break the curse.

The first aspect I wanted to write about is the pre-planning phase, or,

the bit where you decide to give a talk in the first place. But first a

bit about me.

I don't talk all that regularly. I think I'm averaging one talk a year.

I don't consider myself to be a natural talk-giver: I don't particularly

enjoy it and I still get quite nervous. So the first question is: why do

it?

One motivation is that you want to attend a particular conference, and

presenting at it makes it much easier to get institutional support for doing so

(i.e., travel and accommodation covered). At the moment, I've written some talk

proposals for

I'd planned to write some private mail on the subject of preparing and

delivering conference talks. However, each time I try to write that mail,

I've managed to somehow contrive to lose it. So I thought I'd try as a

blog post instead, to break the curse.

The first aspect I wanted to write about is the pre-planning phase, or,

the bit where you decide to give a talk in the first place. But first a

bit about me.

I don't talk all that regularly. I think I'm averaging one talk a year.

I don't consider myself to be a natural talk-giver: I don't particularly

enjoy it and I still get quite nervous. So the first question is: why do

it?

One motivation is that you want to attend a particular conference, and

presenting at it makes it much easier to get institutional support for doing so

(i.e., travel and accommodation covered). At the moment, I've written some talk

proposals for

Check it out

Check it out